Ever found yourself wishing you could check on your Azure environment without having to open up your laptop? There's an app for that. I've been using it in my lab environment for a few years, and it's a brilliant tool that isn't widely used. Let's delve a little further into it, shall we?

Overview

The Azure mobile app brings many of the capabilities of Microsoft Azure to your phone, giving you flexibility and control away from your desk. Whether you're troubleshooting on the move, responding to an alert, or simply checking on the status of your resources, the app puts them within reach. Below, we cover some of the app's key features.

Azure Services

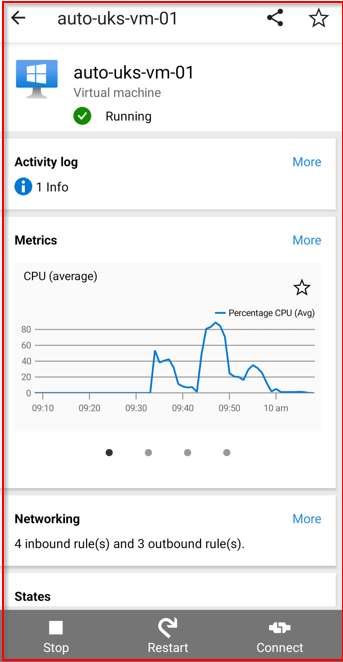

Virtual Machines: Quickly view the status of your VMs, including their operational state (running, stopped, etc.), and perform essential actions like starting or stopping VMs directly from the app.

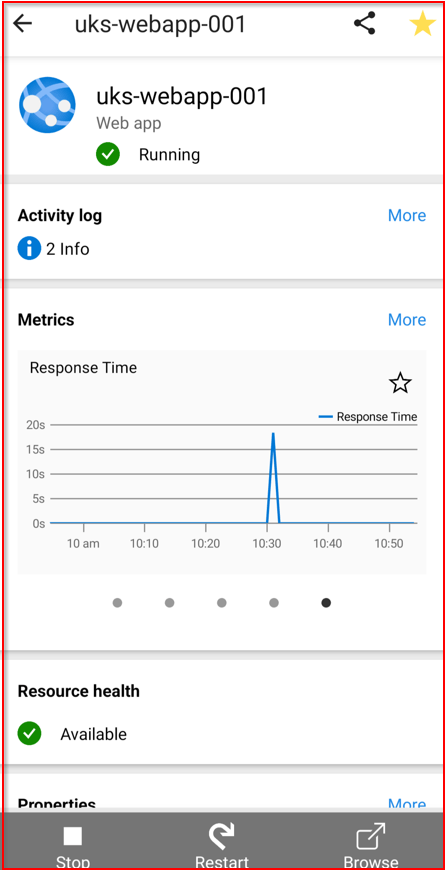

Web Apps: Access a summary of your deployed web applications, monitor their health, and manage basic functionalities like restarts or scaling operations.

SQL Databases: Keep an eye on your databases' performance metrics and usage statistics, and receive alerts for operational issues or performance bottlenecks.

Application Insights: Gain insights into your application’s performance and health, with access to telemetry data including request rates, response times, and failure rates.

Latest Alerts: Receive notifications and alerts regarding the health and status of your Azure resources. This section helps you stay informed about any issues that need your attention, allowing for quick responses to keep your services running smoothly.

Recent Resources: A convenient list of the Azure resources you’ve recently accessed or modified. This feature offers quick navigation back to these resources for further monitoring or management.

Service Health: View the current health status of Azure services globally or in specific regions. This section provides updates on any ongoing issues, planned maintenance, or advisories that might affect your services.

Cloud Shell: Direct access to Azure Cloud Shell from the app, allowing you to run PowerShell or Azure CLI commands for advanced management tasks. This powerful feature brings the flexibility of command-line tools to your mobile device.

Resource Groups: View and manage your resource groups, with a structured view of the resources in each group. This makes related resources easier to organize and find.

Microsoft Learn: Stay up-to-date with the latest Azure tutorials, courses, and learning paths from Microsoft Learn. It’s a great way to improve your Azure skills and knowledge on the go.

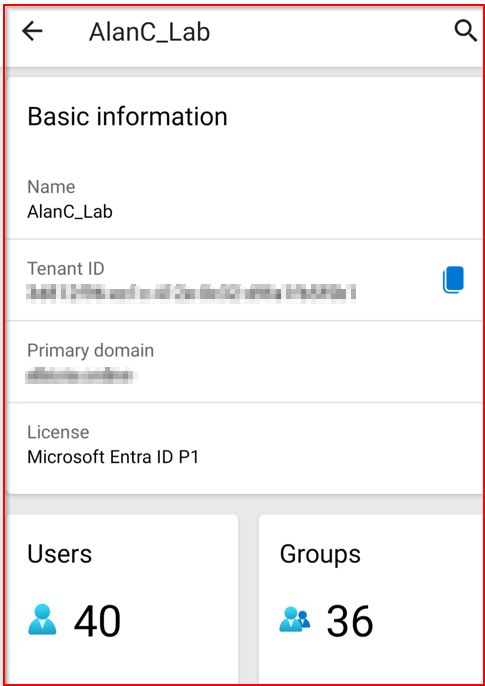

Microsoft Entra ID: Access to identity and access management features through Microsoft Entra, helping you manage users, permissions, and access to your Azure resources securely.

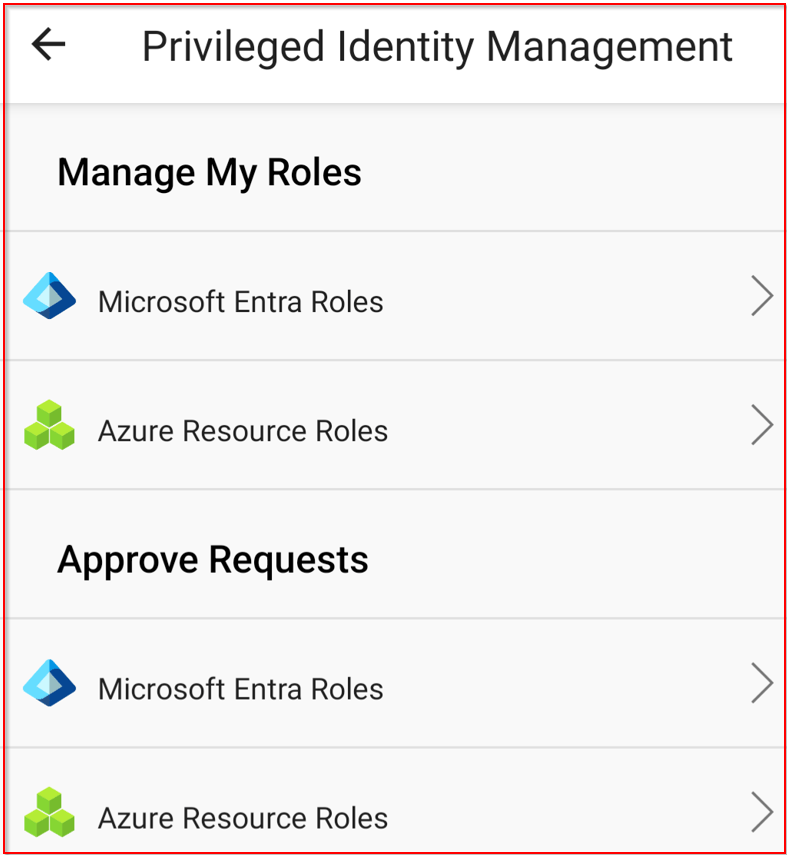

Privileged Identity Management (PIM): Manage your PIM role assignments directly from the app:

- Activate Roles: Easily activate eligible assignments for PIM roles.

- Request Renewals: Request renewals for roles that are expiring.

- Check Pending Requests: Monitor the status of pending PIM requests.

Favorites: Customize your home screen by adding your favorite Azure resources, so the tools and resources you use most are always just a tap away.

Taken together, the Azure mobile app's home screen works as a customizable dashboard for the most important parts of your Azure environment, helping you monitor and manage your cloud resources efficiently, even while on the move.

Why should you use the mobile app?

The app is designed to offer convenience, flexibility, and real-time management from your mobile device. Some of the key reasons to use it include:

On-the-Go Management

The ability to monitor and manage your Azure resources without being tied to a desktop computer is incredibly convenient. Whether you’re traveling, in meetings, or away from your desk, the Azure mobile app ensures you’re always connected to your cloud environment.

Immediate Incident Response

With real-time alerts and notifications, the Azure mobile app lets you respond to and resolve issues as soon as they arise. This faster response can help reduce downtime and improve the reliability of your services.

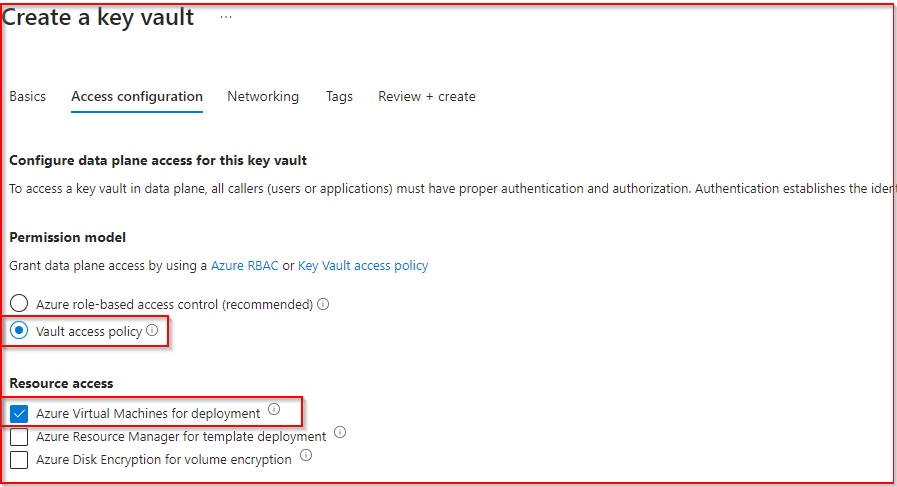

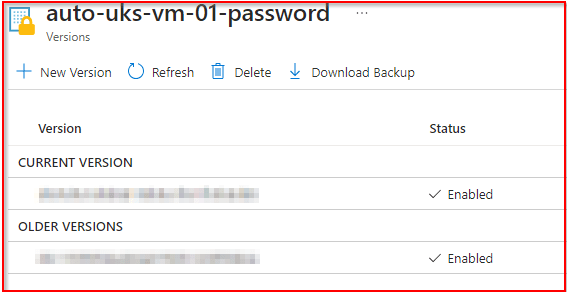

Secure Access

Security is a top priority, and the Azure mobile app supports secure sign-in through Microsoft Entra ID (formerly Azure Active Directory) and Multi-Factor Authentication (MFA). Access to your Azure resources stays convenient while remaining protected from unauthorized use.

Additional Information:

https://azure.microsoft.com/en-gb/get-started/azure-portal/mobile-app

https://learn.microsoft.com/en-us/azure/azure-portal/mobile-app/overview